Data Integration¶

Overview¶

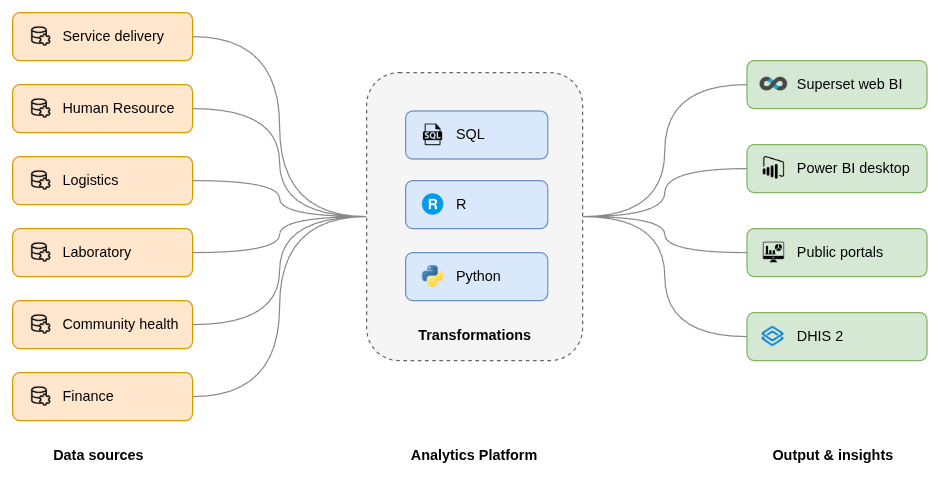

BAO Analytics Platform (AP) provides a comprehensive, modern framework for data integration, designed to simplify the journey from fragmented data sources to actionable insights. By moving away from traditional, rigid data architectures, AP enables organizations to ingest, transform, and analyze data at scale with minimal technical overhead.

The modern ELT philosophy¶

Traditional data management relied heavily on ETL (Extract, Transform, Load). This legacy approach required data to be pre-aggregated and filtered before reaching the data warehouse, often resulting in fragile, complex pipelines where even minor changes in the source system could cause a complete failure.

AP adopts the ELT (Extract, Load, Transform) approach, leveraging the high-performance processing power of modern columnar data warehouses like ClickHouse for data ingestion and transformation. With the ELT approach, raw data is loaded into the data storage and data warehouse in its native format, where it is processed and transformed with SQL views, Python, and R scripts. These are standardized languages that empower data engineers and scientists to perform scalable data manipulation, complex statistical modeling, and advanced analytics directly within the platform.

Benefits of ELT vs ETL¶

The ELT approach offers many benefits compared to ETL.

- Flexibility: By loading raw data first, organizations maintain a "single source of truth" that can be re-processed as business requirements evolve, rather than being locked into initial pre-aggregations.

- Scalability: ELT utilizes the parallel-processing capabilities of cloud and on-premise environments to handle massive datasets up to trillions of rows without requiring dedicated staging hardware.

- Reliability: Pipelines are less prone to failure because they are designed to automatically adapt to changes in source systems, such as the addition of new columns and tables.

- Familiarity: Data engineers can use the familiarity and knowledge they already have of the SQL, Phyton and R languages, without being constrained by rigid drag-and-drop user interfaces.

No-code data connectors¶

AP provides a library of no-code data connectors that eliminate the need for manual loading or custom ETL scripts. These connectors pull data from a wide variety of sources directly into the platform's scalable storage area.

AP provides a range of built-in no-code data pipelines.

- Applications: Integration with popular systems such as DHIS2, CommCare, Kobo Toolbox, ODK and iHRIS.

- Databases: Connections to popular databases such PostgreSQL, MySQL, MS SQL Server and Oracle.

- Cloud storage: Integration with Amazon S3, Azure Blob Storage and Google Cloud Platform cloud storage.

- Data files: Upload of data files in open formats such as CSV and Parquet.

These pipelines are pull-based and can be scheduled as part of workflwos for regular intervals or configured for near real-time (continuous) loading, ensuring that analytical data is never more than a few minutes behind the source system.

Data transformation and enrichment¶

Once data is loaded into the warehouse, AP offers powerful tools to join, merge, and enrich datasets. This transformation layer is critical for creating a unified view across disparate systems.

SQL views¶

Views act as virtual tables that allow users to manage data without moving it physically.

- Logical Views: Execute SQL queries in real-time for frequently changing data.

- Materialized Views: Store query results physically on disk to provide lightning-fast read access for large datasets.

Scripting with Python and R¶

For advanced analytics, such as predictive modeling, machine learning, and statistical computations, AP provides a web-based editor for Python and R. Data scientists can retrieve data directly from the warehouse, perform complex transformations, and write the results back into new tables.

AI-powered transformation¶

A key differentiator of the BAO Analytics Platform is the integration of AI and Large Language Models (LLMs) to bridge the gap between technical and non-technical users.

- Natural text to SQL: Within the Data Browser, users can describe the data they need in plain language. AP automatically translates these questions into complex SQL queries, enabling non-technical staff to perform advanced data retrieval.

- Natural text to script: The script editor allows users to generate Python or R code by describing the desired outcome, such as "perform outlier detection using Z-score", and having the AI-powered editor write the script automatically.

Data lineage¶

Data lineage refers to the process of tracking data's entire lifecycle, documenting its specific origin, the transformations it undergoes, and its destination to ensure transparency, quality, and trust. AP provides full data lineage through its approach to data loading, transformation and integration.

- Connectors: Datasets and data sources are ingested using no-code data pipelines (connectors) in a repeatable and automated way.

- Visibility: AP data loading is organized by workflows, consisting of multiple steps, each with multiple jobs. Job types exist for data pipelines, data quality checks, materialized views, scripts, and destinations. Comprehensive metadata including description and tags, in addition to the query and script code itself, are available for SQL views and scripts. Workflows can be configured and viewed through the AP user interface, and the data model and operations are exposed and accessible in the API. As a result, users have full visibility into the data journey and the data loading and transformation process.

- Change log: Workflows, as well as data pipelines, materialized views, data quality checks and destinations, provide a change log where key information about each run is stored. The information includes a unique job identifier, start time, count of affected rows, duration and status. In addition, detailed logs for each run are available, including informatiob about which tables were created and the row count for each table. This provides complete insight into the history of workflow activity.

Best practices for data integration¶

The AP architecture is built upon several core data engineering principles to ensure security and integrity.

- Unified Data Catalog: A centralized, searchable inventory of all data assets ensures teams can quickly find and access the right information.

- Automated Workflows: Complex, multi-step processes, from ingestion to transformation to quality checks, are orchestrated through a flexible workflow builder to ensure data consistency and freshness.

- Security by Design: All secrets (API keys, passwords) are encrypted using the Google Tink library, and data is protected both at rest and in transit via TLS/SSL.

- Auditability: Detailed change logs are maintained for every task, providing a transparent audit trail for data loading and transformation activities.